A Robust Interactive Facial Animation Editing System

Abstract

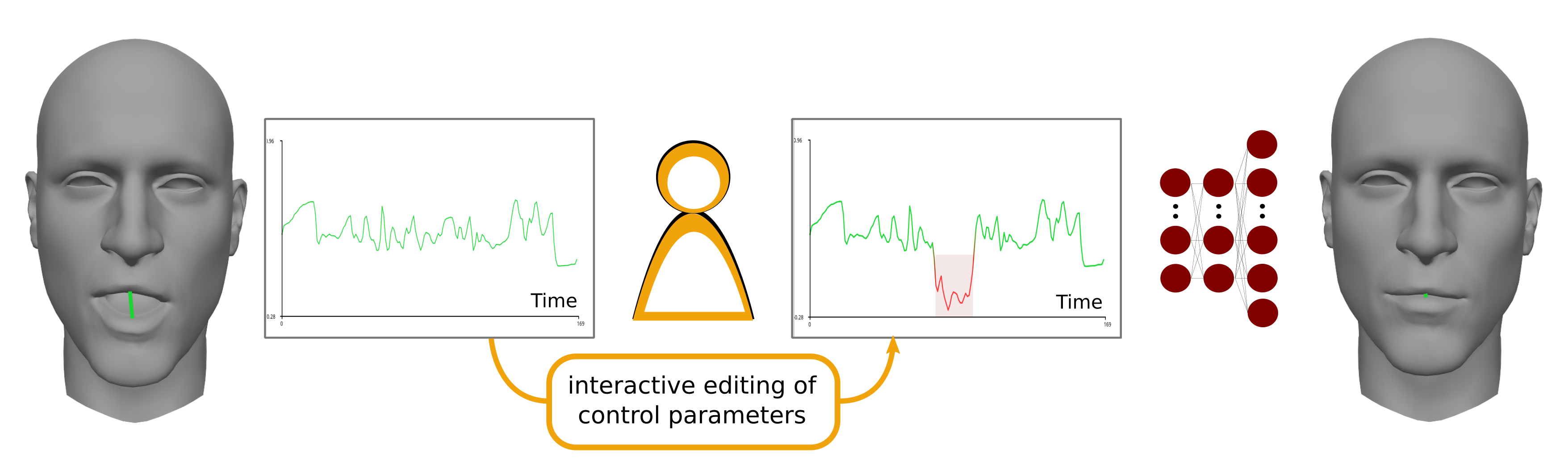

Over the past few years, the automatic generation of facial animation for virtual characters has garnered interest in both the animation research and industry communities. Recent research contributions leverage machine-learning approaches to generate plausible facial animation from audio and/or video signals. However, these approaches do not address the problem of animation editing, that is, correcting an unsatisfactory baseline animation or modifying the animation content itself. In facial animation pipelines, editing an existing animation is just as important and time-consuming as producing a baseline. In this work, we propose a new learning-based approach for easily editing a facial animation from a set of intuitive control parameters. To cope with high-frequency components in facial movements and preserve temporal coherency in the animation, we use a resolution-preserving fully convolutional neural network that maps control parameters to sequences of blendshape coefficients. We stack an additional resolution-preserving animation autoencoder after the regressor to ensure that the system outputs natural-looking animation. The proposed system is robust and can handle coarse, exaggerated edits from non-specialist users. It also retains the high-frequency motion of the facial animation. Training and testing are performed on an extension of the B3D(AC)^2 database (Fanelli et al., 2010), which we make available with this paper.
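To make the term "resolution-preserving" concrete, the sketch below chains a regressor and an autoencoder built from stride-1, zero-padded temporal convolutions, so the output has exactly as many frames as the input. It is a minimal illustration in plain Python, not the network from the paper: the layer counts, channel sizes, kernel size, channel-wise bottleneck, and the names `temporal_conv` and `edit_animation` are all assumptions, and the weights are random rather than trained.

```python
import random

# Hypothetical sizes, chosen only for illustration.
N_CONTROLS = 8       # control parameters per frame
N_BLENDSHAPES = 30   # blendshape coefficients per frame
N_LATENT = 12        # bottleneck channels of the autoencoder
KERNEL = 5           # odd temporal kernel, so padding is symmetric


def make_layer(c_in, c_out, rng):
    """Random (untrained) weights, indexed as weights[out][tap][in]."""
    return [[[rng.uniform(-0.1, 0.1) for _ in range(c_in)]
             for _ in range(KERNEL)]
            for _ in range(c_out)]


def temporal_conv(seq, weights):
    """Stride-1 temporal convolution with zero padding at both ends.

    seq is a list of T frames (each a list of floats); the result also has
    T frames, which is what "resolution-preserving" means in this sketch.
    """
    n_frames, half = len(seq), KERNEL // 2
    out = []
    for t in range(n_frames):
        frame = []
        for w_out in weights:
            acc = 0.0
            for tap, w_tap in enumerate(w_out):
                src = t + tap - half
                if 0 <= src < n_frames:  # frames outside the clip act as zeros
                    acc += sum(w * x for w, x in zip(w_tap, seq[src]))
            frame.append(acc)
        out.append(frame)
    return out


def relu(seq):
    return [[max(0.0, x) for x in frame] for frame in seq]


rng = random.Random(0)
regressor = make_layer(N_CONTROLS, N_BLENDSHAPES, rng)
encoder = make_layer(N_BLENDSHAPES, N_LATENT, rng)
decoder = make_layer(N_LATENT, N_BLENDSHAPES, rng)


def edit_animation(controls):
    """Controls -> blendshape coefficients -> autoencoder pass."""
    coarse = temporal_conv(controls, regressor)
    latent = relu(temporal_conv(coarse, encoder))
    return temporal_conv(latent, decoder)


# A 64-frame clip of control parameters yields 64 frames of coefficients.
controls = [[rng.random() for _ in range(N_CONTROLS)] for _ in range(64)]
animation = edit_animation(controls)
print(len(animation), len(animation[0]))  # 64 30
```

Because no stage downsamples along time, every input frame keeps its own output frame, which is one plausible way to avoid smoothing away fast facial motion. The bottleneck here narrows the channel dimension only, which is again an assumption of this sketch.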

Dataset

The database was created from the 3-D Audio-Visual Corpus of Affective Communication and can be obtained upon request, for research purposes only. To obtain the data, please send an email to research (at) dynamixyz.com stating your name, your organization, and your research interest.

Referencing the database in your work

- E. Berson, V. Barrielle, C. Soladié, N. Stoiber, "A Robust Interactive Facial Animation Editing System", Proceedings of the 12th Annual International Conference on Motion, Interaction, and Games, pp. 26:1–26:10.

- G. Fanelli, J. Gall, H. Romsdorfer, T. Weise, L. Van Gool, "A 3-D Audio-Visual Corpus of Affective Communication", IEEE Transactions on Multimedia, Vol. 12, No. 6, pp. 591–598, 2010.